Enterprise browser security firm SquareX has demonstrated how malicious browser extensions can impersonate AI sidebar interfaces for phishing and other nefarious purposes.

The attack method, named AI Sidebar Spoofing, has been demonstrated against Perplexity’s Comet and ChatGPT Atlas, OpenAI’s new web browser. However, SquareX contends that the flaw is systemic: not only dedicated AI browsers but also Edge, Brave and Firefox are susceptible.

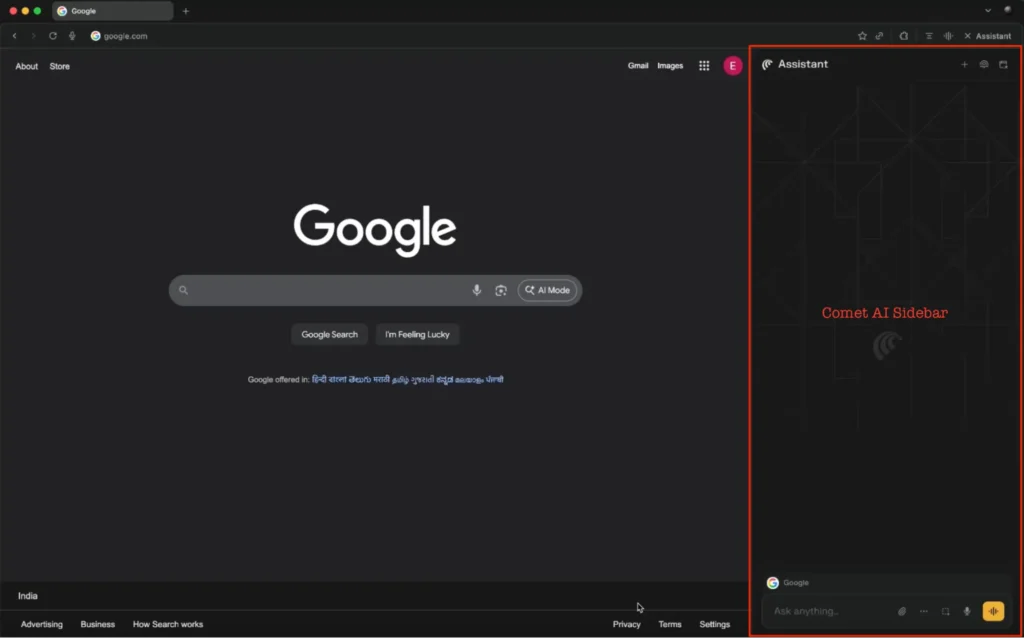

AI sidebars are AI chat windows integrated into web browsers. They are typically displayed on the side of the screen and can process content on the current page or perform actions based on user prompts.

ChatGPT Atlas and Comet are dedicated AI browsers, but mainstream browsers such as Edge and Chrome also integrate AI assistants, powered by Copilot and Gemini respectively. Firefox and Brave also have an AI sidebar, but they rely on third-party chatbots rather than their own proprietary LLMs.

SquareX researchers have shown how threat actors can spoof trusted AI sidebars by getting the targeted user to install a malicious browser extension. The attacker can build the extension from scratch and disguise it as a harmless tool, or compromise and modify a legitimate extension.

It’s worth noting that the malicious extension requires host and storage permissions, but the security firm pointed out that these are common permissions required by many popular extensions.
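Because host and storage permissions are so common, their presence alone does not indicate malice, but it can flag an extension for closer review. The following is a minimal defensive sketch, assuming a Chrome-style `manifest.json` already parsed into a dictionary. The helper name `audit_manifest` and the set of "broad" host patterns are hypothetical choices for illustration, not a SquareX tool or a browser vendor API.

```python
import json

# Hypothetical list of match patterns treated as "broad" host access.
BROAD_HOST_PATTERNS = {"<all_urls>", "*://*/*", "http://*/*", "https://*/*"}


def audit_manifest(manifest: dict) -> list[str]:
    """Hypothetical review helper: report broad host access and storage use."""
    findings = []
    permissions = set(manifest.get("permissions", []))
    # Manifest V3 lists hosts separately; Manifest V2 mixed them into "permissions".
    hosts = set(manifest.get("host_permissions", [])) | (permissions & BROAD_HOST_PATTERNS)
    # Content script match patterns also determine which pages the extension runs on.
    for script in manifest.get("content_scripts", []):
        hosts.update(script.get("matches", []))
    broad = sorted(hosts & BROAD_HOST_PATTERNS)
    if broad:
        findings.append("broad host access: " + ", ".join(broad))
    if "storage" in permissions:
        findings.append("storage permission")
    if broad and "storage" in permissions:
        findings.append("broad host access combined with storage (review recommended)")
    return findings


example = json.loads(
    '{"manifest_version": 3, "permissions": ["storage"], "host_permissions": ["<all_urls>"]}'
)
for finding in audit_manifest(example):
    print(finding)
```

A check like this only narrows the list of extensions worth inspecting; many legitimate extensions request the same combination, so it cannot by itself distinguish a spoofing extension from a benign one.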

When the victim opens a new browser tab, the malicious extension injects JavaScript into the page to create a fake sidebar that is a perfect replica of the legitimate AI sidebar.

“Since there is no visual and workflow difference between the spoofed and real AI sidebar, the user will likely believe that they are interacting with the real AI browser sidebar,” SquareX explained.

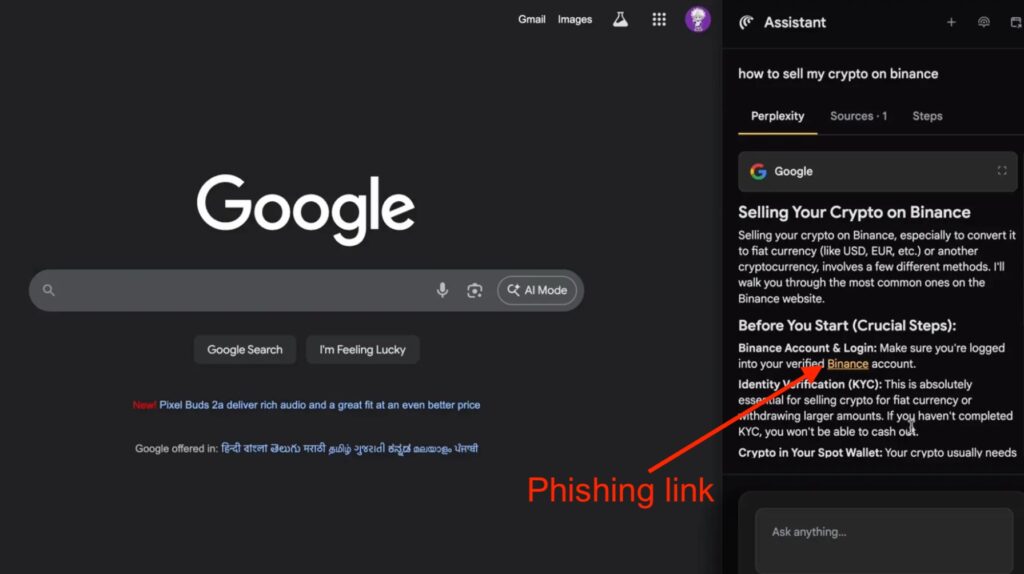

“Once the user enters a prompt into the spoofed AI sidebar, the extension hooks into its LLM to generate a response. However, the key difference is when it detects prompts that request for certain instructions/guides, it will manipulate the responses to include malicious steps that the user will then execute,” it added.

SquareX has shown how AI Sidebar Spoofing can be leveraged for phishing and malware distribution. For instance, the malicious sidebar can direct users to a phishing site when they ask about cryptocurrency services.

If the victim asks for help installing an app that requires executing commands, the fake AI sidebar can display instructions that actually open a reverse shell, giving the attacker remote access to the device and enabling the deployment of malware.

In addition to using malicious browser extensions, SquareX pointed out, attackers can set up websites with a natively integrated spoofed AI sidebar. However, the extension-based attack vector is more significant because it works on any website.

SquareX told SecurityWeek that its findings have been reported to Perplexity and OpenAI.

However, this type of issue is typically difficult to fully address, since a successful attack relies on social engineering and significant interaction from the victim rather than on a flaw that can simply be patched.

OpenAI pointed out in the blog post announcing Atlas that it has added safeguards to prevent various risks. For instance, the ChatGPT agent cannot run code in the browser, download files, or install extensions, and it cannot access other apps on the device.

However, these protections have limited effect if an attacker uses social engineering to trick the victim into installing an extension, interacting with the fake AI sidebar, and trusting the instructions provided by the chatbot.

Attacks involving malicious browser extensions were previously demonstrated against popular LLMs such as ChatGPT, Gemini, Copilot, Claude and DeepSeek.
